Verisimilitude and Local Realism

Created/Modified: 2011-11-10/2019-02-07

I've been putting together a Python framework for simulating deterministic theories of two-photon correlations, and using de Raedt's model as a simple test case. I'd really like to eventually handle Joy Christian's model, but that's for another day.

For now, I want to understand what de Raedt's group is doing, and to that end have waded through half a dozen papers on the subject. As near as I can tell, their photon-correlation model has nothing to do with deterministic learning machines (DLMs), which is too bad because they look kind of cool.

The idea is remarkably simple, at least if I'm interpreting their descriptions correctly. The problem of photon-photon correlations is easy to understand: if you send two correlated photons from a 0 => 0 transition through a pair of polarizing beam-splitters that have arms labeled "R" and "L" and an angle θ between their polarization axes, there is a correlation function defined by:

E(θ) = (Nsame-Ndifferent)/Ntotal

where:

- Nsame = events with the photons both detected in the same arm of their respective polarizing detectors (RR or LL events)

- Ndifferent = events with photons detected in different arms (RL or LR events)

- Ntotal = total number of events detected (that is, just the sum of the same and different counts).
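As a minimal sketch of the definition above, here is E(θ) computed from the four coincidence counts. The function name and argument order are my own illustration, not part of any framework API.

```python
def correlation(n_rr: int, n_ll: int, n_rl: int, n_lr: int) -> float:
    """E = (Nsame - Ndifferent) / Ntotal from the four coincidence counts."""
    n_same = n_rr + n_ll          # both photons in the same arm
    n_different = n_rl + n_lr     # photons in different arms
    n_total = n_same + n_different
    if n_total == 0:
        raise ValueError("no coincidences recorded")
    return (n_same - n_different) / n_total

print(correlation(40, 40, 10, 10))  # 0.6
```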

In reality E(θ) = cos(2*θ). This is also the result predicted by quantum theory, much to everyone's surprise and delight.

Bell's argument shows that this functional form violates a constraint--Bell's Inequality--that must be obeyed by any theory that does not allow instantaneous communication between the (possibly very distant) polarizing detectors. Ergo, reality is "non-local"--it allows such instantaneous communication--since it violates Bell's Inequality. Fortunately we can only infer the existence of such non-local connectedness, not use it to send messages.
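To make the violation concrete, here is a sketch using the CHSH form of Bell's Inequality (an assumption: the post doesn't say which form it has in mind), which requires |S| ≤ 2 for local models. Plugging E(θ) = cos(2θ) into the usual angles gives 2√2. For comparison, the simplest local model of this kind (each detector reports the sign of cos 2(ξ − angle) for a shared polarization ξ, and every pair is detected) gives the triangular correlation E(θ) = 1 − |θ|/45° for |θ| ≤ 90°, which sits exactly at the bound.

```python
import math

def e_quantum(theta_deg: float) -> float:
    """Observed (and quantum-predicted) correlation E(theta) = cos(2*theta)."""
    return math.cos(2 * math.radians(theta_deg))

def e_triangular(theta_deg: float) -> float:
    """Simple local model with perfect detection; valid for |theta| <= 90 degrees."""
    return 1 - abs(theta_deg) / 45

def chsh(e, a, a2, b, b2):
    """S = E(b-a) - E(b'-a) + E(b-a') + E(b'-a'); local models obey |S| <= 2."""
    return e(b - a) - e(b2 - a) + e(b - a2) + e(b2 - a2)

angles = (0.0, 45.0, 22.5, 67.5)  # a, a', b, b' in degrees
print(f"cos(2θ):    S = {chsh(e_quantum, *angles):.4f}")     # S = 2.8284
print(f"triangular: S = {chsh(e_triangular, *angles):.4f}")  # S = 2.0000
```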

Bell's argument depends on some fairly simple assumptions, and one of these is that the detectors are unbiased: the probability of a photon being detected is independent of the polarizer angle. The de Raedt model involves a quite clever violation of this constraint, as follows:

To pair up photons--to identify them as members of the same correlated pair--we need to match their arrival times. Suppose, therefore, that the detector introduced a delay that depended on the polarizer orientation relative to the (objectively real) photon polarization, causing some photons to arrive later than they otherwise would. We would then fail to register some pairs as coincidences, even though the detection efficiency for individual photons would be unchanged: we would detect every photon eventually, just not quickly enough to tag it as paired with its partner.

Our pair detection efficiency would then be a function of the angle between the polarizers, and so, with an appropriately narrow time window and a carefully crafted functional form for the angle-dependent delay, we can generate a fairly wide range of shapes for the correlation function E(θ), including the one that actually occurs. Because the photon polarization is the same at both detectors, the assumed delays ultimately depend on the angles of the polarizers, so the resulting coincidence event frequencies also depend on those angles once averaged over the photon polarization and the time jitter in the detectors. [edited for correctness]
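Here is a toy version of the mechanism just described. It is a sketch under stated assumptions, not de Raedt's code: both photons share a polarization ξ, each detector reports the sign of cos 2(ξ − angle) as its arm, and the delay is t0 · r · |sin 2(ξ − angle)|^d with r uniform on [0, 1). The exponent d = 4, the delay form, and window/t0 = 0.0025 (the ratio quoted later in this post) are all assumptions. With an infinite window every pair is kept and the correlation is the triangular 1 − |θ|/45°; narrowing the window is what lets the delay reshape E(θ).

```python
import math
import random

def simulate_pairs(theta_deg, n_pairs=100_000, window=0.0025, t0=1.0, d=4, seed=1):
    """Toy angle-dependent-delay model (assumed form, not de Raedt's code).

    Returns (E, kept_fraction) for polarizers at 0 and theta_deg degrees.
    """
    rng = random.Random(seed)
    a, b = 0.0, math.radians(theta_deg)
    same = different = 0
    for _ in range(n_pairs):
        xi = rng.uniform(0.0, math.pi)          # polarization shared by both photons
        arm1 = math.cos(2 * (xi - a)) >= 0      # True = "R" arm, False = "L" arm
        arm2 = math.cos(2 * (xi - b)) >= 0
        # Assumed delay: random fraction r of an envelope set by xi - angle.
        t1 = t0 * rng.random() * abs(math.sin(2 * (xi - a))) ** d
        t2 = t0 * rng.random() * abs(math.sin(2 * (xi - b))) ** d
        if abs(t1 - t2) > window:
            continue                            # both photons detected, pair missed
        if arm1 == arm2:
            same += 1
        else:
            different += 1
    kept = same + different
    if kept == 0:
        raise ValueError("no coincidences inside the window")
    return (same - different) / kept, kept / n_pairs

if __name__ == "__main__":
    for theta in (0.0, 22.5, 45.0, 67.5, 90.0):
        e, kept = simulate_pairs(theta)
        ref = math.cos(2 * math.radians(theta))
        print(f"{theta:5.1f} deg  E = {e:+.3f}  cos(2θ) = {ref:+.3f}  kept = {kept:.4f}")
```

Note that every photon still gets a detection and an arm in this sketch; only the pairing is lost, which is the whole trick.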

It's quite clever really, although I'm pretty sure it doesn't describe reality, for reasons given below.

As an aside, in poking around for background on why de Raedt's model is unlikely to describe reality, I came across this delightful review and recollection by Alain Aspect, whose fundamental work in realizing the experimental conditions for measuring quantum correlations was absolutely ground-breaking, and was what convinced me--a decided skeptic on the matter at the time--that reality was indeed non-local.

The important aspect of de Raedt's model is an extremely large variation in the maximum time of passage through the detection apparatus. This isn't actually a delay, but rather a variation in the maximum of the quasi-random time it takes a photon to pass through the apparatus. It may be possible to model this as a delay, but the time scale involved, which is what matters, would be the same either way.

The trick behind the model can be seen in the figure below: this is a plot of the difference in arrival time of the two photons as a function of the angle between the polarizing detectors.

For small angles, the photons are bunched up with nearly zero time difference between them. At angles close to 90 degrees the time differences are large, spread out over an envelope that has a sinusoidal shape.

The scale on the y-axis is in microseconds. The black line along the x-axis is actually two lines, separated by 19 ns, the actual time window used in the Aspect experiments.

For the de Raedt model to work, only a small fraction of the pairs that are created can be detected in the narrow time window that is required to pick out the photon pair distribution along the x-axis, which is the only region that has the right shape. The photon arrival times have to be smeared out over a much, much larger interval than the coincidence window, a fact that would be stunningly obvious to the most careless of experimenters, and I've never seen any evidence that the people who have done these kinds of experiments are anything less than meticulous.

With respect to Aspect's work, the random coincidence rate was monitored using a delayed-coincidence window. In de Raedt's model the coincidence rate in that window would vary dramatically, which is the sort of thing people do notice.
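The delayed-coincidence check can be sketched as follows. Counting coincidences with one stream shifted by an offset much larger than any genuine pair time difference estimates the accidental rate, which for independent streams at low rates is approximately R_a · R_b · 2τ, where τ is the half-width of the window. The function names and the symmetric ±τ window are assumptions for illustration, not a model of Aspect's actual electronics.

```python
import bisect

def count_coincidences(times_a, times_b, window, offset=0.0):
    """Count A events with at least one B event in [t+offset-window, t+offset+window].

    times_b must be sorted. offset=0 gives prompt coincidences; an offset much
    larger than any genuine pair time difference gives delayed (accidental) ones.
    """
    count = 0
    for t in times_a:
        lo = bisect.bisect_left(times_b, t + offset - window)
        hi = bisect.bisect_right(times_b, t + offset + window)
        if hi > lo:
            count += 1
    return count

def expected_accidentals(rate_a, rate_b, window, duration):
    """Usual estimate for independent streams at low rates: R_a * R_b * (2 * window) * T."""
    return rate_a * rate_b * 2 * window * duration
```

If the delayed count departs from the estimate, or changes with polarizer angle, something other than accidental coincidences is landing in the delayed window.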

So while de Raedt's model is kind of cute, it isn't a description of experimental reality, and it would be quite wrong to suggest otherwise: it can be excluded by a fairly casual look at the large existing corpus of detailed results on experimental violations of Bell's Inequalities. The model certainly can't reproduce important aspects of experimental reality, such as the arrival time distribution and the unaccounted-for ratio between coincidence and singles counts, which will be much smaller in de Raedt's model than in reality. So no one would ever say this model can reproduce experimental reality.

I used de Raedt's model as a simple test case for a framework for evaluating local realistic models of two-photon correlation. It seems to me that any local realistic model that can reproduce experimental reality can be instantiated in this framework, and I hope people who are interested in this kind of thing will use it. It's licensed under the GPL2.

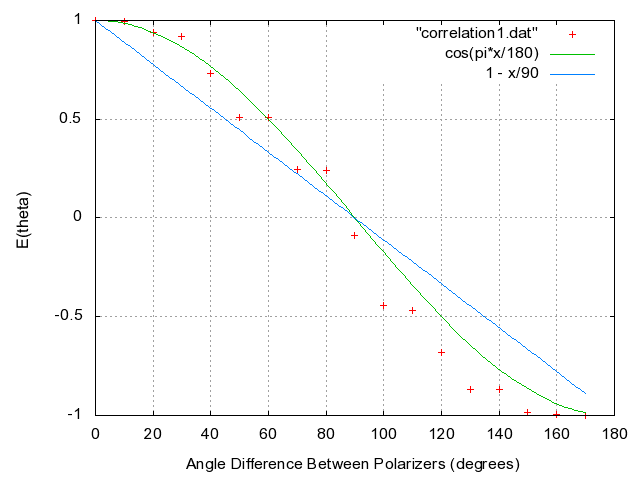

The kind of result the framework produces can be seen in the following figure, which is illustrative only--it doesn't actually represent the detailed physics particularly well.

The idea should be pretty clear, however: the strength of the correlation function is driven by the density variation along the x-axis in the previous figure. The red points represent the tiny fraction of artificially delayed pairs that fall into the time window. In the case of Aspect's experiments, again, the time window was 19 ns, and using de Raedt's value of (time window)/(time spread) = 0.0025, this would mean pairs spread over many microseconds.
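The arithmetic behind "many microseconds" is a one-liner, using only the two numbers given above:

```python
window_ns = 19.0   # coincidence window in Aspect's experiments
ratio = 0.0025     # (time window)/(time spread), de Raedt's value as quoted above
spread_ns = window_ns / ratio
print(f"implied time spread: {spread_ns:.0f} ns = {spread_ns / 1000:.1f} µs")
# implied time spread: 7600 ns = 7.6 µs
```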

This last point brings up a rather important logical lacuna in de Raedt's model: the model depends on matching photons with each other, which in his model is done first by pair number, after which pairs are rejected if their time difference falls outside the coincidence window.

But in the real experiment photons do not carry around pair-identification-labels. They must be matched up using their arrival times, and in de Raedt's model the random coincidence rate will be elevated by all those other time-spread photons arriving in the middle of things. Therefore an analysis that starts by assuming that all non-pair photons can be excluded as possible matches is not logically consistent given a theory that says arrival time is an extremely poor, and polarization-dependent, mechanism for matching photons in pairs.
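For contrast, here is a sketch of the matching a real experiment has to do: two time-sorted streams of (time, arm) events, one per detector, paired using timestamps alone. The greedy two-pointer scheme is my own assumption (real coincidence electronics and analysis codes differ in details); the point is that nothing in it can tell a genuine partner from a stray photon that happens to land in the window.

```python
def match_by_time(events_a, events_b, window):
    """Pair events from two detectors using only their timestamps.

    events_a, events_b: lists of (time, arm) tuples sorted by time.
    Each A event is paired with the earliest unused B event within
    +/- window; every event is used at most once.
    Returns a list of (arm_a, arm_b) for the matched pairs.
    """
    pairs = []
    i = j = 0
    while i < len(events_a) and j < len(events_b):
        ta, arm_a = events_a[i]
        tb, arm_b = events_b[j]
        if tb < ta - window:
            j += 1      # B event is too early for this or any later A event
        elif tb > ta + window:
            i += 1      # no remaining B event is close enough to this A event
        else:
            pairs.append((arm_a, arm_b))
            i += 1
            j += 1
    return pairs
```

When photon arrival times are smeared over microseconds, a scheme like this inevitably accepts some cross-pair matches, which is exactly what a label-based analysis assumes away.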

This is why simulations should hew to the Principle of Verisimilitude to the greatest extent possible: the structure of the simulation, including the analysis, should be as strongly isomorphic with experimental reality as possible. In the case of coincidence and correlation experiments, this generally means having separate processes for separate detectors, and writing the data to file for later analysis by a process that may represent the external electronics.
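A minimal sketch of that structure: a hypothetical source hands each detector its photons, each detector writes only (time, arm) rows to its own CSV file, and the analysis reads those files back. Here the detectors are ordinary functions rather than separate OS processes, and the source rate, delay form, and parameters are assumptions for illustration; the file boundary is the part that matters, since nothing that crosses it carries a pair label.

```python
import csv
import math
import random
import tempfile
from pathlib import Path

def emit_pairs(n_pairs, rate, seed):
    """Hypothetical source: Poisson emission times and one shared polarization per pair."""
    rng = random.Random(seed)
    t, photons = 0.0, []
    for _ in range(n_pairs):
        t += rng.expovariate(rate)
        photons.append((t, rng.uniform(0.0, math.pi)))
    return photons

def run_detector(photons, angle_deg, out_path, seed, t0=1e-6, d=4):
    """One detector: writes (time, arm) rows only, like time-tagging electronics would."""
    rng = random.Random(seed)
    a = math.radians(angle_deg)
    rows = []
    for t_emit, xi in photons:
        delay = t0 * rng.random() * abs(math.sin(2 * (xi - a))) ** d  # assumed delay form
        arm = "R" if math.cos(2 * (xi - a)) >= 0 else "L"
        rows.append((t_emit + delay, arm))
    rows.sort()  # events are recorded in arrival order
    with open(out_path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["time", "arm"])
        writer.writerows(rows)

def load_events(path):
    """Analysis side: reads back only what an experimenter would actually have."""
    with open(path, newline="") as f:
        return [(float(r["time"]), r["arm"]) for r in csv.DictReader(f)]

if __name__ == "__main__":
    photons = emit_pairs(1000, rate=1e5, seed=0)
    with tempfile.TemporaryDirectory() as tmp:
        run_detector(photons, 0.0, Path(tmp) / "detector_a.csv", seed=1)
        run_detector(photons, 22.5, Path(tmp) / "detector_b.csv", seed=2)
        events_a = load_events(Path(tmp) / "detector_a.csv")
        events_b = load_events(Path(tmp) / "detector_b.csv")
        print(len(events_a), len(events_b))
```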

Even in the present framework, I've only carried around the pair "count" identifier so that I could reproduce de Raedt's analysis, which depends on it. It can also be useful as a debugging tool. But it should never be used in the analysis proper, which should depend only on the information one would actually have from an experimental run in the kind of apparatus one is simulating.
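One way to keep the pair identifier strictly a debugging aid is sketched below: run the same kind of time-only matching the analysis uses, and only afterwards consult the hidden pair_id to measure what fraction of accepted matches are genuine. The function and the (time, arm, pair_id) record layout are hypothetical.

```python
def match_purity(events_a, events_b, window):
    """Debugging aid only: fraction of time-matched pairs that are genuine.

    events_a, events_b: time-sorted lists of (time, arm, pair_id) tuples.
    Matching uses timestamps alone (greedy, each event used at most once);
    pair_id is read only after a match is accepted, never to decide it.
    """
    i = j = 0
    matched = genuine = 0
    while i < len(events_a) and j < len(events_b):
        ta, _, id_a = events_a[i]
        tb, _, id_b = events_b[j]
        if tb < ta - window:
            j += 1
        elif tb > ta + window:
            i += 1
        else:
            matched += 1
            genuine += id_a == id_b   # the label is only consulted here
            i += 1
            j += 1
    return genuine / matched if matched else float("nan")
```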

When the Principle of Verisimilitude is employed, however, computational frameworks like this one can be extremely useful in evaluating theoretical claims, particularly in cases like this one where we already have a large body of data that tightly constrains the acceptable outcome of simulation results using any alternative model.